PoC: KVM

The Motivation

Run Kernel-based Virtual Machine (KVM) on my local machine instead of:

- Microsoft Terminal Server / Remote Desktop

- VMware

- Proxmox (for now)

The goal is to use only the on-board Linux utilities.

Setup

System Information

vmadmin@ts01:~$ sudo -i

root@ts01:~# cat /etc/os-release

PRETTY_NAME="Ubuntu 25.10"

NAME="Ubuntu"

VERSION_ID="25.10"

VERSION="25.10 (Questing Quokka)"

VERSION_CODENAME=questing

ID=ubuntu

ID_LIKE=debian

HOME_URL="https://www.ubuntu.com/"

SUPPORT_URL="https://help.ubuntu.com/"

BUG_REPORT_URL="https://bugs.launchpad.net/ubuntu/"

PRIVACY_POLICY_URL="https://www.ubuntu.com/legal/terms-and-policies/privacy-policy"

UBUNTU_CODENAME=questing

LOGO=ubuntu-logo

Install and setup packages

root@ts01:~# apt install -y qemu-kvm libvirt-daemon-system libvirt-clients bridge-utils virt-manager cpu-checker

Libvirt (Orchestrator)

- Enable

root@ts01:~# systemctl enable --now libvirtd

- Status

root@ts01:~# systemctl status libvirtd

● libvirtd.service - libvirt legacy monolithic daemon

Loaded: loaded (/usr/lib/systemd/system/libvirtd.service; enabled; preset: enabled)

Active: active (running) since Wed 2026-02-04 19:32:47 CET; 52min ago

Invocation: de3b4269f9074f69aef468813b833a4b

TriggeredBy: ● libvirtd.socket

● libvirtd-admin.socket

● libvirtd-ro.socket

Docs: man:libvirtd(8)

https://libvirt.org/

Main PID: 26280 (libvirtd)

Tasks: 25 (limit: 32768)

Memory: 24.1M (peak: 51M)

CPU: 9.655s

CGroup: /system.slice/libvirtd.service

├─26280 /usr/sbin/libvirtd --timeout 120

├─26388 /usr/sbin/dnsmasq --conf-file=/var/lib/libvirt/dnsmasq/default.conf --leasefile-ro --dhcp-script=/usr/lib/libvirt/libvirt_leaseshelper

└─26389 /usr/sbin/dnsmasq --conf-file=/var/lib/libvirt/dnsmasq/default.conf --leasefile-ro --dhcp-script=/usr/lib/libvirt/libvirt_leaseshelper

Feb 04 20:03:14 ts01 dnsmasq-dhcp[26388]: DHCPDISCOVER(virbr0) 52:54:00:2a:f9:66

Feb 04 20:03:14 ts01 dnsmasq-dhcp[26388]: DHCPOFFER(virbr0) 192.168.122.128 52:54:00:2a:f9:66

- Check that KVM acceleration is available

root@ts01:~# kvm-ok
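The kvm-ok tool from cpu-checker reports whether hardware acceleration can be used. As a rough manual cross-check, the sketch below counts the logical CPUs that advertise the vmx (Intel) or svm (AMD) flag and tests for the KVM device node. The function names count_virt_flags and kvm_device_present are made up for this example and are not part of any package.

```shell
# Count logical CPUs that advertise hardware virtualization
# (vmx = Intel VT-x, svm = AMD-V). The file argument defaults to
# /proc/cpuinfo and only exists so the function can be tried on a copy.
count_virt_flags() {
    local cpuinfo="${1:-/proc/cpuinfo}"
    grep -E -c '^flags[[:space:]]*:.*[[:space:]](vmx|svm)([[:space:]]|$)' "$cpuinfo"
}

# Succeeds when the KVM device node exists (default: /dev/kvm).
kvm_device_present() {
    [ -e "${1:-/dev/kvm}" ]
}

# Example (not executed here):
#   count_virt_flags            # a count of 0 means no vmx/svm flag was found
#   kvm_device_present && echo "/dev/kvm is there"
```

A count of 0 usually means virtualization is switched off in the firmware setup or the CPU does not support it.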

Virsh (Controller)

- List

root@ts01:~# virsh list --all

Id Name State

--------------------------

- Node Info

root@ts01:~# virsh nodeinfo

CPU model: x86_64

CPU(s): 24

CPU frequency: 1197 MHz

CPU socket(s): 1

Core(s) per socket: 12

Thread(s) per core: 2

NUMA cell(s): 1

Memory size: 63375964 KiB

- Net List

root@ts01:~# virsh net-list --all

Name State Autostart Persistent

default active yes yes

- Start and enable autostart

root@ts01:~# virsh net-start default

root@ts01:~# virsh net-autostart default

- Prepare for the first Debian Image

root@ts01:~# cp /home/admin/debian-13.3.0-amd64-netinst.iso /var/lib/libvirt/images

root@ts01:~# chmod 666 /var/lib/libvirt/images/debian-13.3.0-amd64-netinst.iso

root@ts01:~# # Do this only on a sandbox

root@ts01:~# chmod 777 /var/lib/libvirt/images

Prepare KVM User

- Add the user vmadmin to the libvirt and kvm groups (the new membership applies after the next login):

vmadmin@ts01:~$ sudo usermod -aG libvirt,kvm $USER

vmadmin@ts01:~$ less /etc/group

- Optional: start virt-manager as this user in an xrdp session:

virt-manager

Advanced - Bridging

- Define a libvirt network on top of the host bridge br0 so that VMs can be bridged (this assumes br0 already exists on the host)

root@ts01:~# sudo virsh net-define /dev/stdin <<EOF

<network>

<name>br0</name>

<forward mode="bridge"/>

<bridge name="br0"/>

</network>

EOF

- Start

root@ts01:~# virsh net-start br0

Network br0 started

root@ts01:~# virsh net-autostart br0

Network br0 marked as autostarted
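The network definition can also be kept in a file, which makes it easier to review before it is handed to virsh. A minimal sketch, assuming the host bridge already exists; the helper name bridge_network_xml and the path /tmp/br0.xml are made up for illustration.

```shell
# Print a libvirt network definition that hands guests over to an
# existing host bridge. The libvirt network gets the same name as the bridge.
bridge_network_xml() {
    local bridge="$1"
    cat <<EOF
<network>
  <name>${bridge}</name>
  <forward mode="bridge"/>
  <bridge name="${bridge}"/>
</network>
EOF
}

# Example (not executed here):
#   bridge_network_xml br0 > /tmp/br0.xml
#   virsh net-define /tmp/br0.xml
#   virsh net-start br0
#   virsh net-autostart br0
```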

- Shutdown running VM

root@ts01:~# virsh shutdown debian13

Domain 'debian13' is being shutdown

- Edit

root@ts01:~# virsh edit debian13

- Change:

<interface type='network'>

<source network='default'/>

- To:

<interface type='bridge'>

<source bridge='br0'/>

<model type='virtio'/>

</interface>

- After saving, virsh confirms:

Domain 'debian13' XML configuration edited.

Advanced - Converting virtual disks

Converting a VHDX Windows Disk

- Inspect the disk:

root@ts01:~# qemu-img info /home/vmadmin/vm-surf01.vhdx

image: /home/vmadmin/vm-surf01.vhdx

file format: vhdx

virtual size: 127 GiB (136365211648 bytes)

disk size: 41 GiB

cluster_size: 33554432

Child node '/file':

filename: /home/vmadmin/vm-surf01.vhdx

protocol type: file

file length: 41 GiB (43994054656 bytes)

disk size: 41 GiB

- Get the disk ready for KVM

root@ts01:~# cp /home/admin/debian-13.3.0-amd64-netinst.iso /var/lib/libvirt/images

root@ts01:~# chmod 666 /var/lib/libvirt/images/debian-13.3.0-amd64-netinst.iso

root@ts01:~# chmod 777 /var/lib/libvirt/images

- Convert the disk

root@ts01:~# qemu-img convert -p -f vhdx -O qcow2 /home/vmadmin/vm-surf01.vhdx /var/lib/libvirt/images/vm-surf01.qcow2

(100.00/100%)

- Inspect the conversion result

root@ts01:~# qemu-img info /var/lib/libvirt/images/vm-surf01.qcow2

image: /var/lib/libvirt/images/vm-surf01.qcow2

file format: qcow2

virtual size: 127 GiB (136365211648 bytes)

disk size: 37.6 GiB

cluster_size: 65536

Format specific information:

compat: 1.1

compression type: zlib

lazy refcounts: false

refcount bits: 16

corrupt: false

extended l2: false

Child node '/file':

filename: /var/lib/libvirt/images/vm-surf01.qcow2

protocol type: file

file length: 37.6 GiB (40399208448 bytes)

disk size: 37.6 GiB

Converting a VMDK VMWare Disk

- Inspect

root@ts01:~# qemu-img info 'Windows 8.x x64.vmdk'

image: Windows 8.x x64.vmdk

file format: vmdk

virtual size: 100 GiB (107374182400 bytes)

disk size: 16.7 GiB

cluster_size: 65536

Format specific information:

cid: 2432867383

parent cid: 4294967295

create type: monolithicSparse

extents:

[0]:

virtual size: 107374182400

filename: Windows 8.x x64.vmdk

cluster size: 65536

format:

Child node '/file':

filename: Windows 8.x x64.vmdk

protocol type: file

file length: 16.7 GiB (17974165504 bytes)

disk size: 16.7 GiB

- Convert

root@ts01:~# qemu-img convert -f vmdk -O qcow2 'Windows 8.x x64.vmdk' win8.qcow2
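Both conversions follow the same pattern: name the source format, write qcow2 into the libvirt image directory. The sketch below wraps that pattern. It assumes the source format can be read from the file extension (true for the .vhdx and .vmdk files used here) and that the target directory is /var/lib/libvirt/images unless stated otherwise. The helper names qcow2_target and convert_to_qcow2 are made up for this example.

```shell
# Map a source disk image to its qcow2 target inside an image directory.
# Example: /home/vmadmin/vm-surf01.vhdx -> /var/lib/libvirt/images/vm-surf01.qcow2
qcow2_target() {
    local src="$1" dir="${2:-/var/lib/libvirt/images}"
    local base
    base="$(basename "$src")"
    printf '%s/%s.qcow2\n' "$dir" "${base%.*}"
}

# Convert one image with a progress bar (-p). The source format is taken
# from the lower-case file extension, which is an assumption of this sketch.
convert_to_qcow2() {
    local src="$1"
    local fmt="${src##*.}"
    qemu-img convert -p -f "$fmt" -O qcow2 "$src" "$(qcow2_target "$src")"
}

# Example (not executed here):
#   convert_to_qcow2 /home/vmadmin/vm-surf01.vhdx
#   convert_to_qcow2 'Windows 8.x x64.vmdk'
```

Quoting "$src" everywhere matters here because the VMware disk name contains spaces.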

VM Installation

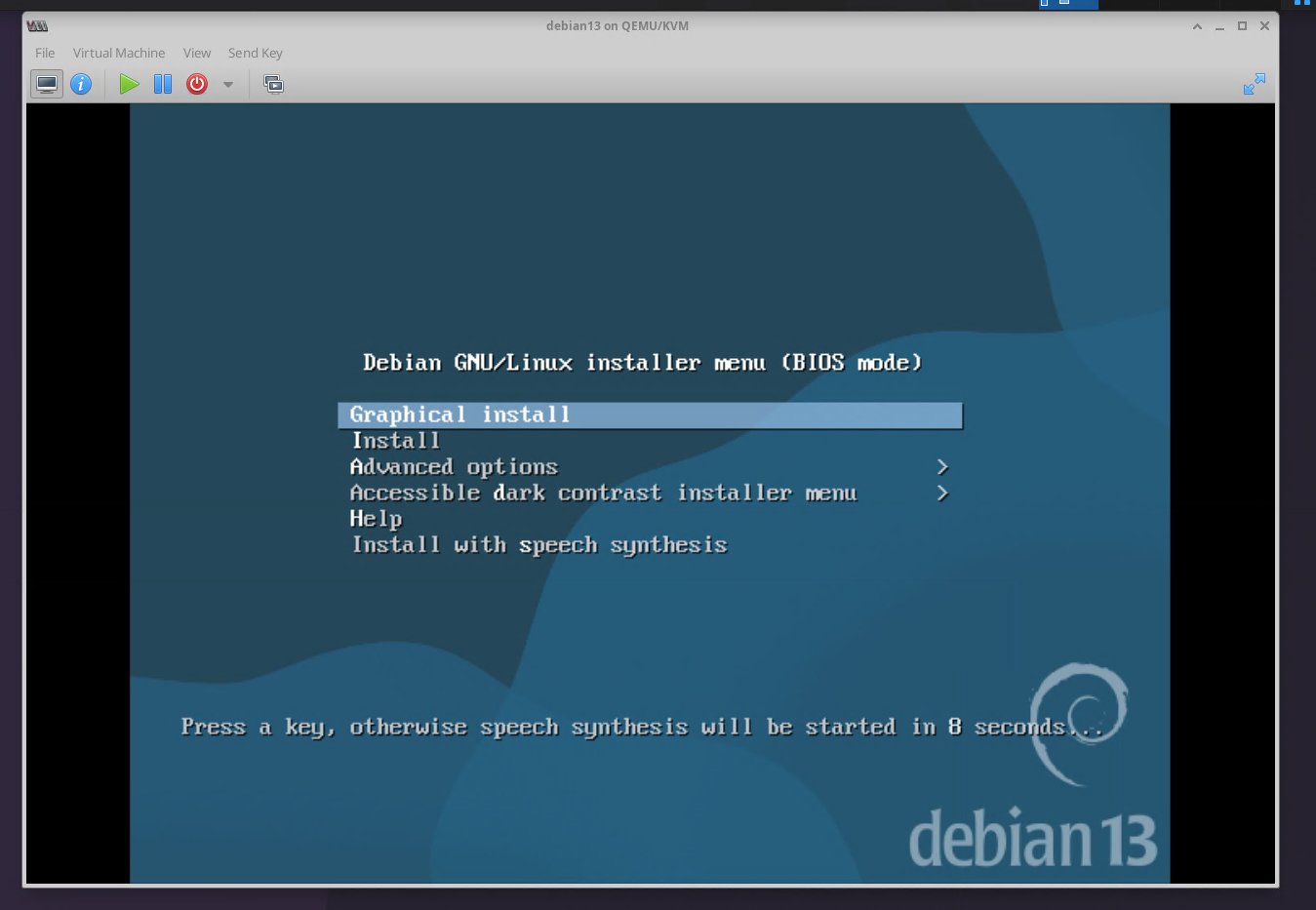

Installation Debian from ISO

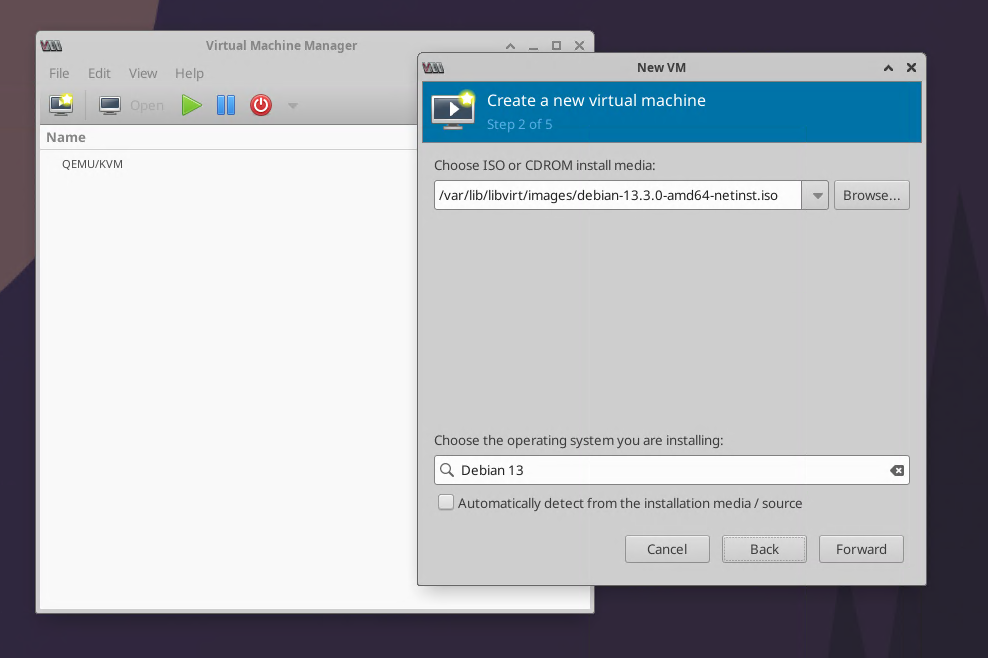

- VM Installer01

- VM Installer02

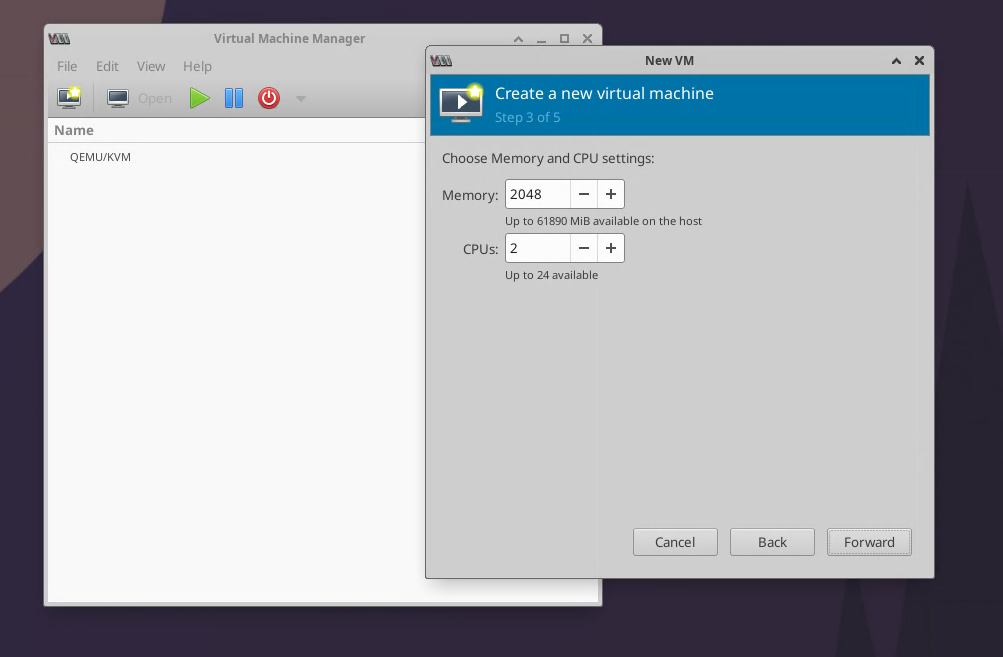

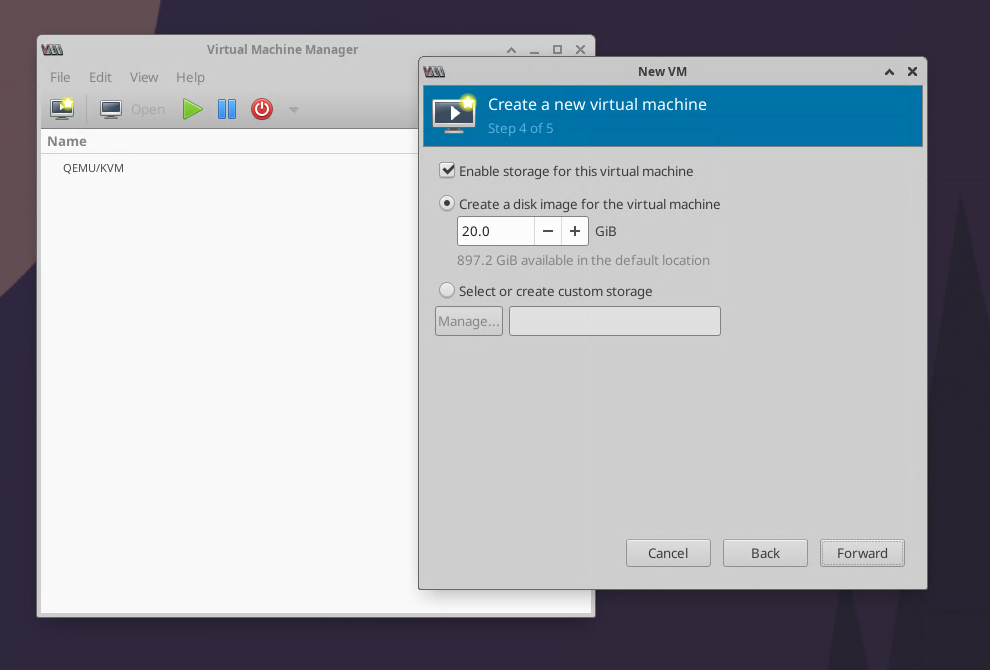

- VM Installer05

- VM Installer06

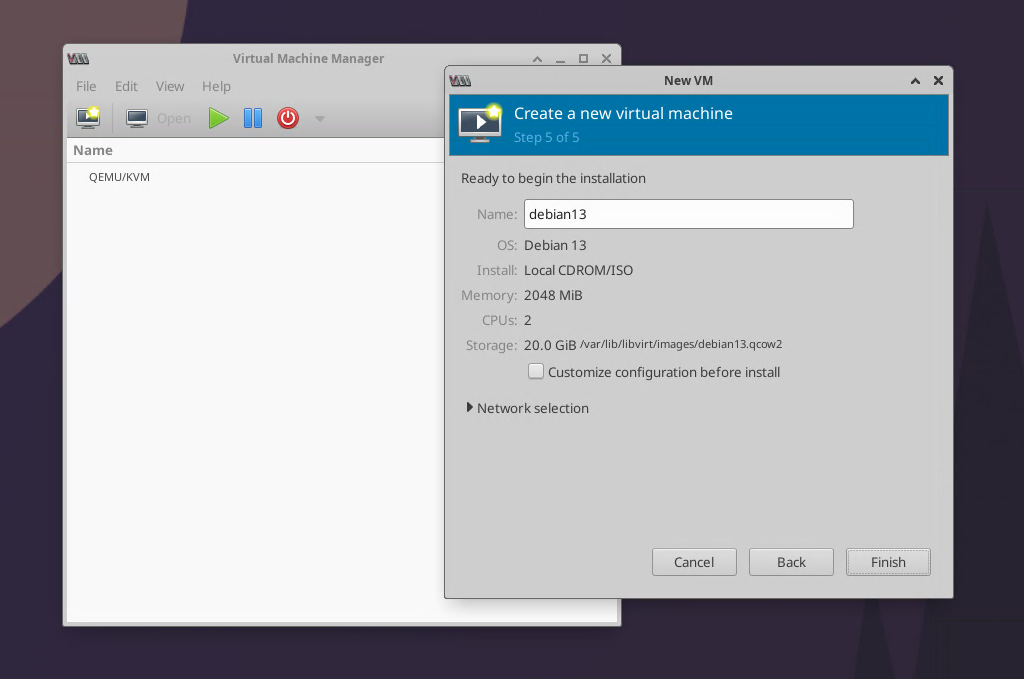

- VM Installer07

- VM Installer08

- VM Installer09

- VM Installer10

- VM Installer11

- VM Installer12

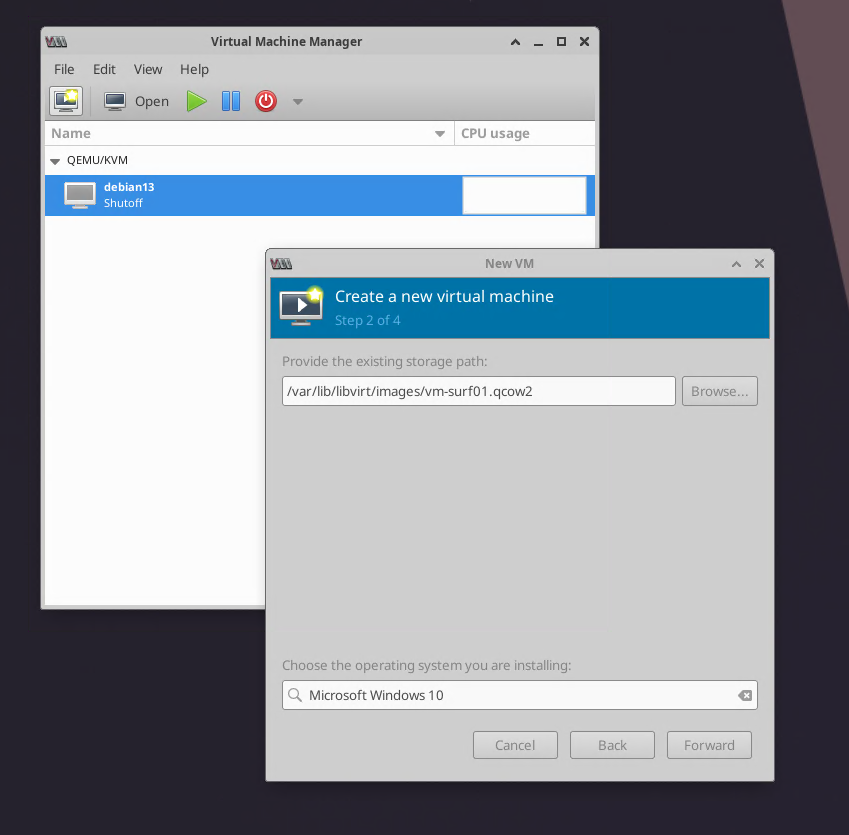

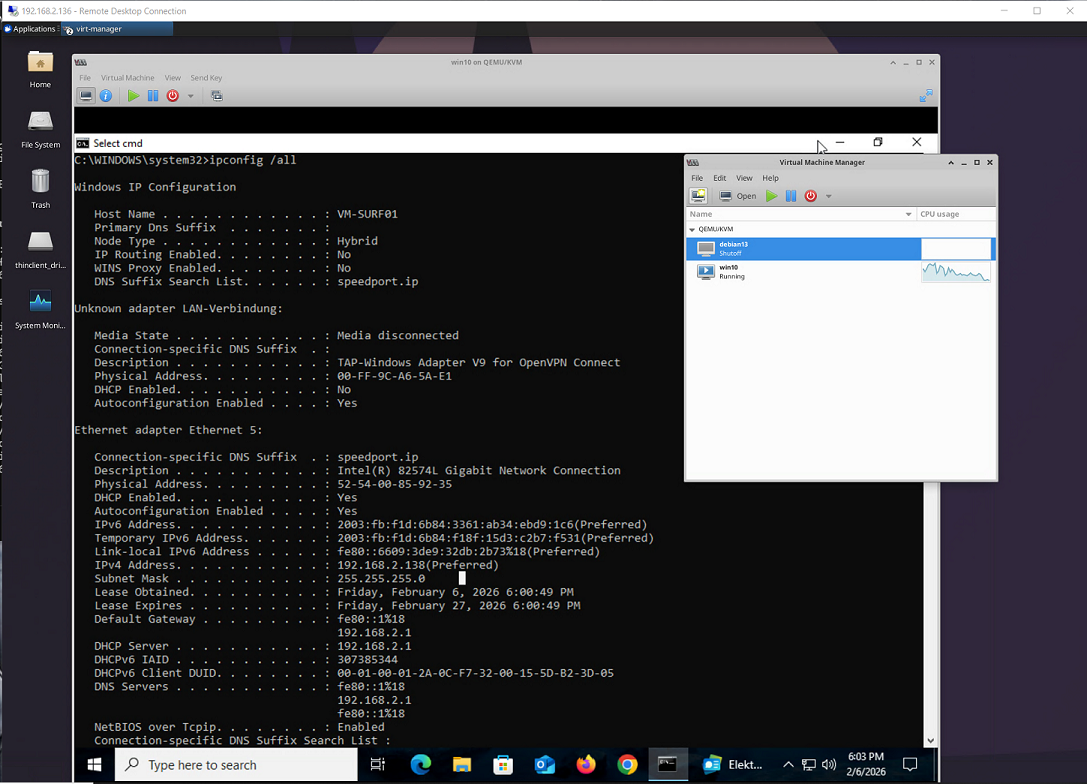

Installation Win10 VM (former vhdx file)

- Install01

- Install02

- Install03

- Install04

- Win10 in running state

Installation Win11 VM (from iso, no registration)

- Install Windows 11 without a network connection

- When the installer asks for network connectivity, press Shift + F10 to open a command prompt

- Then type:

OOBE\BYPASSNRO

- After finishing the setup, shut down the VM

root@ts01:/data/vmtemplate# virsh shutdown win11

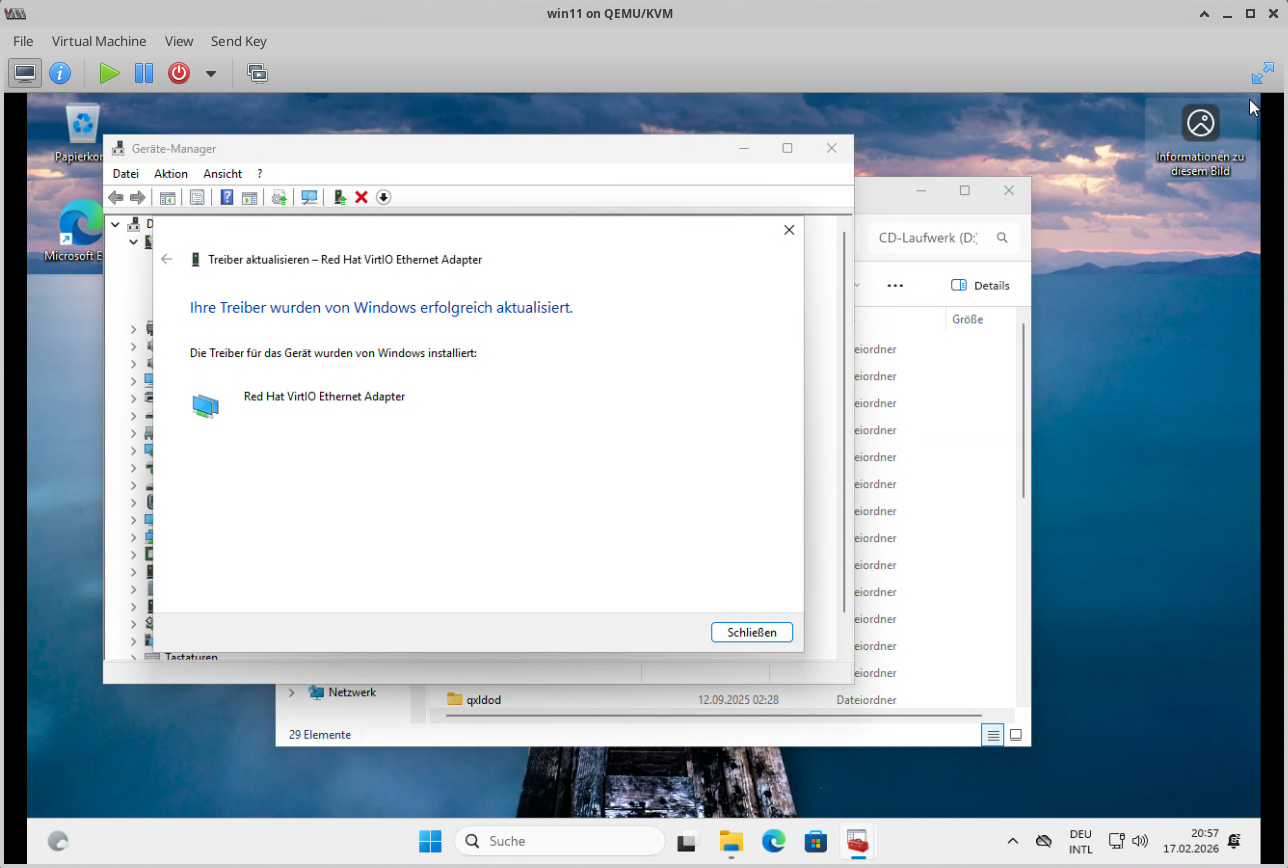

- It is required to install the network driver, but first detach the current iso image:

root@ts01:/data/vmtemplate# virsh detach-disk win11 sdb --config

Disk detached successfully

- Attach the network interface

root@ts01:/data/vmtemplate# virsh attach-interface --domain win11 --type bridge --source br0 --model virtio --config

Interface attached successfully

- Check:

root@ts01:/data/vmtemplate# virsh domiflist win11

Interface Type Source Model MAC

-----------------------------------------------------------

- bridge br0 virtio 52:54:00:16:25:ee

- Download driver disk:

cd /var/lib/libvirt/images

wget https://fedorapeople.org/groups/virt/virtio-win/direct-downloads/latest-virtio/virtio-win.iso

- Attach driver disk:

virsh attach-disk win11 /var/lib/libvirt/images/virtio-win.iso sdb --type cdrom --mode readonly --targetbus sata --config

- Start VM

root@ts01:/data/vmtemplate# virsh start win11

Domain 'win11' started

- From Device Manager, Install missing Driver from the new disk: NetKVM\w10\amd64

- Install

- Stop VM

- Detach Helper Disk

root@ts01:/data/iso# virsh detach-disk win11 sdb --config

Disk detached successfully

- Finished

Installation macOS (Project from GitHub)

Repository Initialization

The foundation of the project relies on the OSX-KVM repository, which provides the OpenCore bootloader configurations and scripts to fetch official macOS assets.

- Clone the project repository

git clone --depth 1 https://github.com/kholia/OSX-KVM.git

cd OSX-KVM

Preparation Tasks

Before configuring the Virtual Machine, specific scripts must be run to acquire the macOS installation media.

Fetching macOS Assets

Run the Python script to download the Recovery image directly from Apple's servers.

./fetch-macOS-v2.py

Note: You will be prompted to choose a version (e.g., Ventura, Sonoma). This creates a "BaseSystem.dmg" file.

Image Conversion

The downloaded DMG must be converted into a raw image format that KVM can easily mount.

dmg2img -i BaseSystem.dmg BaseSystem.img

Virtual Disk Creation

Create the virtual hard drive where the OS will be permanently installed.

qemu-img create -f qcow2 mac_hdd_ng.img 128G

Host-Level Optimizations

To prevent Kernel Panics during the boot process, the KVM module must be configured to ignore unhandled Model Specific Registers (MSRs).

Permanent MSR Fix

Edit or create the KVM configuration file on the host:

sudo nano /etc/modprobe.d/kvm.conf

Add the following configuration line:

options kvm ignore_msrs=1

To apply the change without rebooting the host:

echo 1 | sudo tee /sys/module/kvm/parameters/ignore_msrs
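If the host setup is scripted, the option line should be added only once, even when the script runs again. A minimal sketch of that idea follows; ensure_line is a hypothetical helper written for this example, not an existing tool.

```shell
# Append a line to a file only if that exact line is not there yet,
# so running the setup twice does not duplicate the option.
ensure_line() {
    local file="$1" line="$2"
    touch "$file"
    grep -q -x -F -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Example (as root, not executed here):
#   ensure_line /etc/modprobe.d/kvm.conf 'options kvm ignore_msrs=1'
#   cat /sys/module/kvm/parameters/ignore_msrs   # shows the value currently in effect
```

The grep options -x and -F compare the whole line as a fixed string, so a commented-out copy of the option does not count as a match.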

Virtual Machine XML Configuration

The VM is defined in Libvirt using a modified XML template. Key adjustments ensure the guest OS perceives the hardware as a compatible Apple-Intel machine.

CPU Masking and OSK

The CPU must be set to 'Penryn' with an Intel vendor ID mask to bypass Apple's hardware checks.

<cpu mode='custom' match='exact' check='none'>

<model fallback='allow'>Penryn</model>

<feature policy='require' name='vendor_id' value='GenuineIntel'/>

</cpu>

Note: The OSK (Apple System Management Controller Key) must be included in the QEMU command-line arguments section of the XML.

Storage and Firmware Paths

Ensure the following paths point to your actual local files:

- Loader: /home/vmadmin/OSX-KVM/OVMF_CODE_4M.fd

- NVRAM: /home/vmadmin/OSX-KVM/OVMF_VARS-1024x768.fd

- OpenCore: /home/vmadmin/OSX-KVM/OpenCore/OpenCore.qcow2

- BaseSystem: /home/vmadmin/OSX-KVM/BaseSystem.img

- Target Disk: /home/vmadmin/OSX-KVM/mac_hdd_ng.img

Filesystem Permissions

Libvirt runs as a separate system user and requires explicit access to files located in a user's home directory.

- Enable directory traversal

chmod +x /home/vmadmin

chmod +x /home/vmadmin/OSX-KVM

- Change ownership to the Libvirt-QEMU user

sudo chown libvirt-qemu:kvm /home/vmadmin/OSX-KVM/mac_hdd_ng.img

sudo chown libvirt-qemu:kvm /home/vmadmin/OSX-KVM/BaseSystem.img

sudo chown libvirt-qemu:kvm /home/vmadmin/OSX-KVM/OpenCore/OpenCore.qcow2
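A file is only reachable if every directory above it can be traversed, so it can help to list those directories and test each one as the libvirt-qemu user. The sketch below does the listing; parent_dirs is a made-up helper name, and the check in the comment assumes sudo is allowed to run commands as libvirt-qemu.

```shell
# Print every parent directory of a path, deepest first, stopping
# before "/" (absolute paths) or "." (relative paths).
parent_dirs() {
    local dir
    dir="$(dirname "$1")"
    while [ "$dir" != "/" ] && [ "$dir" != "." ]; do
        printf '%s\n' "$dir"
        dir="$(dirname "$dir")"
    done
}

# Example (not executed here): report directories libvirt-qemu cannot enter
#   parent_dirs /home/vmadmin/OSX-KVM/mac_hdd_ng.img | while IFS= read -r d; do
#       sudo -u libvirt-qemu test -x "$d" </dev/null || echo "no traverse: $d"
#   done
```

The util-linux command namei -l, given the same path, prints owner and mode for each path component and is a quick alternative.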

The Installation Process

- Format: Use Disk Utility inside the "macOS Base System" to erase the virtual disk as APFS with a GUID Partition Map.

- Install: Select "Reinstall macOS" and target the newly formatted drive.

- Bootloader Logic: Upon rebooting, the OpenCore picker will show a "macOS Installer" icon. This must be selected for all intermediate reboots (usually 3-4 times).

- Final Boot: Once the installer icon disappears, select the name of your formatted drive (e.g., "MAConKVM") to complete the user setup.

- Pictures:

File:Mac04.png

File:Mac05a.png


USB Operations

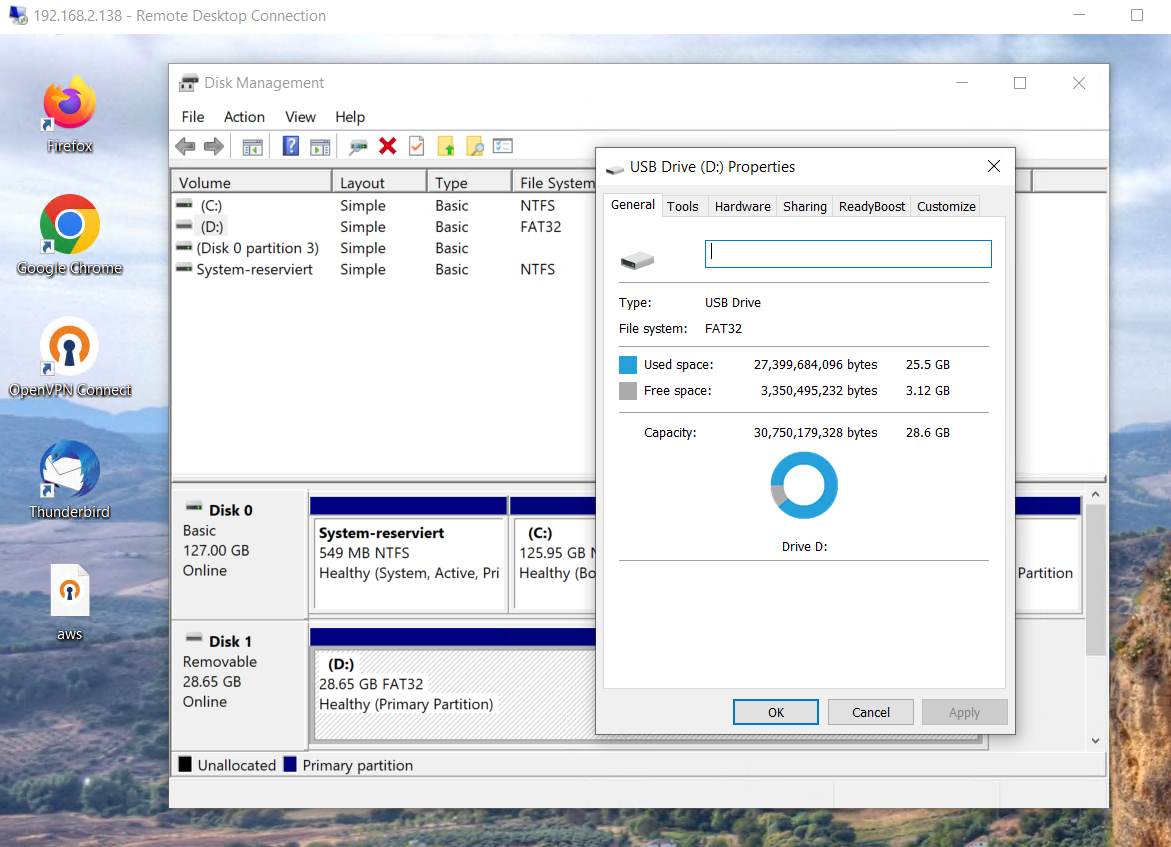

Attach a USB Stick to the VM

- Get attached devices

- Note: We're after ID 0781:5591 SanDisk Corp.

root@ts01:~# lsusb

Bus 001 Device 001: ID 1d6b:0002 Linux Foundation 2.0 root hub

Bus 001 Device 002: ID 8087:800a Intel Corp. Hub

Bus 002 Device 001: ID 1d6b:0002 Linux Foundation 2.0 root hub

Bus 002 Device 002: ID 3938:1032 MOSART Semi. 2.4G RF Keyboard & Mouse

Bus 002 Device 003: ID 04b3:3025 IBM Corp. NetVista Full Width Keyboard

Bus 002 Device 004: ID 17aa:1034 VIA Labs, Inc. USB Hub

Bus 002 Device 005: ID 0bda:0129 Realtek Semiconductor Corp. RTS5129 Card Reader Controller

Bus 002 Device 006: ID 046d:c52b Logitech, Inc. Unifying Receiver

Bus 003 Device 001: ID 1d6b:0003 Linux Foundation 3.0 root hub

Bus 003 Device 002: ID 17aa:1034 VIA Labs, Inc. USB Hub

Bus 003 Device 003: ID 0781:5591 SanDisk Corp. Ultra Flair

Bus 004 Device 001: ID 1d6b:0002 Linux Foundation 2.0 root hub

Bus 004 Device 002: ID 8087:8002 Intel Corp. 8 channel internal hub

- Attach SanDisk to win10

root@ts01:~# virsh attach-device win10 --file <(cat <<EOF

<hostdev mode='subsystem' type='usb'>

<source>

<vendor id='0x0781'/>

<product id='0x5591'/>

</source>

</hostdev>

EOF

) --persistent

Device attached successfully
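The hostdev element only differs in the vendor and product ID, so it can be generated from the ID column of lsusb. A small sketch under that assumption; the helper names lsusb_id and usb_hostdev_xml and the file /tmp/usb.xml are made up for illustration.

```shell
# Pull the "vendor:product" column out of one lsusb line.
lsusb_id() {
    printf '%s\n' "$1" | awk '{ print $6 }'
}

# Turn a "vendor:product" pair (e.g. 0781:5591) into the <hostdev>
# element that virsh attach-device and detach-device read from a file.
usb_hostdev_xml() {
    local id="$1"
    local vendor="${id%%:*}" product="${id##*:}"
    cat <<EOF
<hostdev mode='subsystem' type='usb'>
  <source>
    <vendor id='0x${vendor}'/>
    <product id='0x${product}'/>
  </source>
</hostdev>
EOF
}

# Example (not executed here):
#   usb_hostdev_xml 0781:5591 > /tmp/usb.xml
#   virsh attach-device win10 --file /tmp/usb.xml --persistent
```

Writing the XML to a regular file also means the same file can be passed to virsh detach-device later.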

- Optional: dump the domain XML to inspect the current settings

root@ts01:~# virsh dumpxml win10 | grep -A10 hostdev

<hostdev mode='subsystem' type='usb' managed='no'>

<source>

<vendor id='0x0781'/>

<product id='0x5591'/>

</source>

<address type='usb' bus='0' port='4'/>

....

- Shutdown Win10

root@ts01:~# virsh shutdown win10

Domain 'win10' is being shutdown

- Start win10 again

root@ts01:~# virsh start win10

Domain 'win10' started

- Get Status

root@ts01:~# virsh list --all

Id Name State

---------------------------

3 win10 running

- debian13 shut off

- Attached USB Stick to Win10

Detach a USB Stick from the VM

- Detach device

root@ts01:~# virsh detach-device win10 --file <(cat <<EOF

<hostdev mode='subsystem' type='usb'>

<source>

<vendor id='0x0781'/>

<product id='0x5591'/>

</source>

</hostdev>

EOF

) --persistent

Device detached successfully

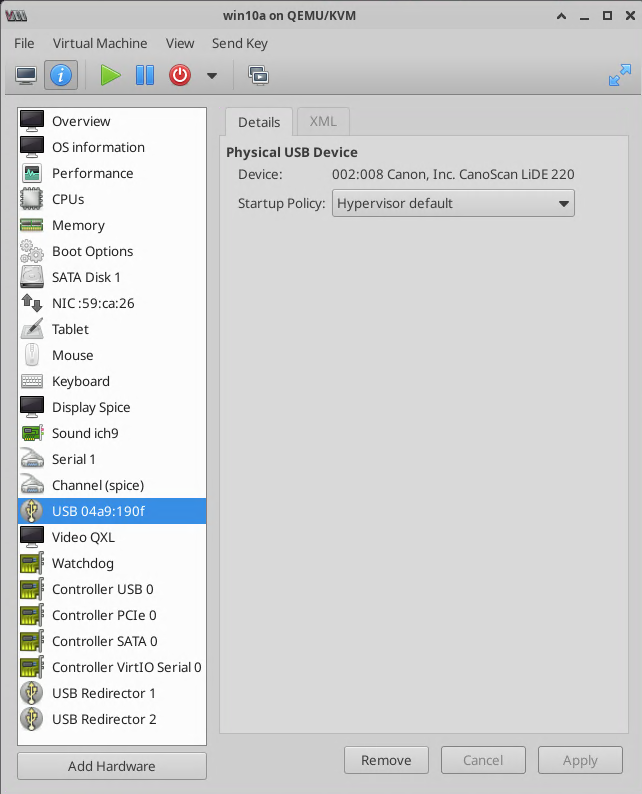

Attach a USB Scanner to the VM

- Pick the device

root@ts01:~# lsusb

Bus 002 Device 008: ID 04a9:190f Canon, Inc. CanoScan LiDE 220

- Edit the VM settings, assuming the VM is called win10

root@ts01:~# virsh edit win10

Add the USB device inside the XML devices element, like:

<devices>

...

...

</devices>

- Add and save:

<hostdev mode='subsystem' type='usb' managed='yes'>

<source>

<vendor id='0x04a9'/>

<product id='0x190f'/>

</source>

</hostdev>

- Alternatively, add the USB device using virt-manager in an X session

Basic admin commands

Cloning a machine

Cloning win10 to win10a

- Shutdown Main

root@ts01:~# virsh shutdown win10

Domain 'win10' is being shutdown

root@ts01:~# virsh list --all

Id Name State

---------------------------

3 win10 running

- debian13 shut off

- Clone

- Clone Process

root@ts01:~# virt-clone --original win10 --name win10a --auto-clone

Allocating 'vm-surf01-clone.qcow2' | 127 GB 00:02:21

Clone 'win10a' created successfully.

Snapshots

- Create a snapshot

root@ts01:~# virsh snapshot-create win10a

Domain snapshot 1770483334 created

root@ts01:~# virsh snapshot-list win10a

Name Creation Time State

---------------------------------------------------

1770483334 2026-02-07 17:55:34 +0100 running

- Revert snapshot

root@ts01:~# virsh snapshot-revert win10a 1770483334

Domain snapshot 1770483334 reverted
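The snapshot above gets a numeric name generated by libvirt. With virsh snapshot-create-as a readable name can be chosen instead. A small sketch that builds such a name from a prefix and the current time; snapshot_name and the prefix pre-update are made up for this example.

```shell
# Build a readable snapshot name from a prefix and the current local time,
# in the form <prefix>-YYYYMMDD-HHMMSS.
snapshot_name() {
    printf '%s-%s\n' "$1" "$(date +%Y%m%d-%H%M%S)"
}

# Example (not executed here):
#   name="$(snapshot_name pre-update)"
#   virsh snapshot-create-as win10a "$name"
#   virsh snapshot-revert win10a "$name"
```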

Hibernate

- Hibernate with managedsave

root@ts01:~# virsh managedsave win10a

Domain 'win10a' state saved by libvirt

- List

root@ts01:~# virsh list --all

Id Name State

---------------------------

3 win10 running

4 debian13 running

- win10a shut off

- Start again (this restores the saved state)

root@ts01:~# virsh start win10a

Domain 'win10a' started

Reboot behaviour

- Set the following in /etc/default/libvirt-guests to allow a managed reboot of the VMs, meaning that VMs that were running before the host went down are started again automatically after the reboot

# What to do when the host shuts down

ON_SHUTDOWN=suspend

# What to do when the host boots up

ON_BOOT=start

# Number of seconds to wait for a guest to shut down/suspend

SHUTDOWN_TIMEOUT=300

Backup/Restore of the VM settings

To Backup the VM settings only run:

virsh list --all

virsh dumpxml vmname > vmname.xml

- To Restore

virsh define vmname.xml
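To back up the settings of every VM in one go, the two commands can be combined in a loop. A minimal sketch, assuming the XML files should land in one directory named after each domain; backup_domain_xml and the target directory are made up for illustration. Disk images are not included, only the definitions.

```shell
# Dump the XML of every defined domain into a target directory.
# `virsh list --all --name` prints one domain name per line.
backup_domain_xml() {
    local dir="$1" name
    mkdir -p "$dir"
    virsh list --all --name | while IFS= read -r name; do
        [ -n "$name" ] || continue          # skip the trailing blank line
        virsh dumpxml "$name" > "$dir/$name.xml" </dev/null
    done
}

# Example (not executed here):
#   backup_domain_xml /root/vm-xml-backup
#   virsh define /root/vm-xml-backup/win10.xml    # restore one definition
```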

Adding a new VM Storage

Partition the new storage

root@ts01:~# parted /dev/nvme0n1

GNU Parted 3.6

Using /dev/nvme0n1

Welcome to GNU Parted! Type 'help' to view a list of commands.

(parted) mklabel gpt

Warning: The existing disk label on /dev/nvme0n1 will be destroyed and all data on this disk will be lost. Do you want to continue?

Yes/No? y

(parted) quit

Information: You may need to update /etc/fstab.

Add Filesystem

root@ts01:~# mkfs.ext4 /dev/nvme0n1p1

mke2fs 1.47.2 (1-Jan-2025)

/dev/nvme0n1p1 contains a ntfs file system labelled 'NVM'

Proceed anyway? (y,N) y

Discarding device blocks: done

Creating filesystem with 500099328 4k blocks and 125026304 inodes

Filesystem UUID: 2382c329-2c7c-4a57-855d-aea09003dd30

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912, 819200, 884736, 1605632, 2654208,

4096000, 7962624, 11239424, 20480000, 23887872, 71663616, 78675968,

102400000, 214990848

Allocating group tables: done

Writing inode tables: done

Creating journal (262144 blocks): done

Writing superblocks and filesystem accounting information: done

Mount Filesystem

- Make and mount

root@ts01:~# mkdir /vmstorage

root@ts01:~# mount /dev/nvme0n1p1 /vmstorage

- Check

root@ts01:~# df -h

Filesystem Size Used Avail Use% Mounted on

tmpfs 6.1G 16M 6.1G 1% /run

/dev/sda2 915G 459G 411G 53% /

tmpfs 31G 0 31G 0% /dev/shm

efivarfs 256K 148K 104K 59% /sys/firmware/efi/efivars

tmpfs 5.0M 12K 5.0M 1% /run/lock

tmpfs 1.0M 0 1.0M 0% /run/credentials/systemd-journald.service

tmpfs 1.0M 0 1.0M 0% /run/credentials/systemd-resolved.service

/dev/sda1 1.1G 6.3M 1.1G 1% /boot/efi

tmpfs 31G 8.0K 31G 1% /tmp

tmpfs 6.1G 116K 6.1G 1% /run/user/60578

tmpfs 6.1G 108K 6.1G 1% /run/user/1000

/dev/nvme0n1p1 1.9T 2.1M 1.8T 1% /vmstorage

- Get UUID

root@ts01:~# blkid /dev/nvme0n1p1

/dev/nvme0n1p1: UUID="2382c329-2c7c-4a57-855d-aea09003dd30" BLOCK_SIZE="4096" TYPE="ext4" PARTLABEL="primary" PARTUUID="f49249d9-50eb-45bd-aafc-701ef7d66f5b"

- Add to /etc/fstab

UUID=2382c329-2c7c-4a57-855d-aea09003dd30 /vmstorage ext4 defaults 0 2

- Reboot to test!
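The fstab line can be derived from the blkid output instead of copying the UUID by hand, and the entry can be tried before the reboot. A sketch under the assumption that the mount options stay at "defaults 0 2" as in the line above; uuid_from_blkid and fstab_entry are made-up helper names.

```shell
# Extract the filesystem UUID from one line of blkid output.
# The leading whitespace in the pattern keeps PARTUUID from matching.
uuid_from_blkid() {
    printf '%s\n' "$1" | sed -n 's/.*[[:space:]]UUID="\([^"]*\)".*/\1/p'
}

# Print an fstab entry: UUID, mount point, filesystem type (default ext4).
fstab_entry() {
    printf 'UUID=%s %s %s defaults 0 2\n' "$1" "$2" "${3:-ext4}"
}

# Example (not executed here):
#   uuid="$(uuid_from_blkid "$(blkid /dev/nvme0n1p1)")"
#   fstab_entry "$uuid" /vmstorage >> /etc/fstab
#   umount /vmstorage && mount -a && df -h /vmstorage   # tries the entry without a reboot
```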

Create a new Pool and apply new VMs

- List current pool

root@ts01:~# virsh pool-list --all

Name State Autostart

---------------------------------

default active yes

- Create a new pool using the new vmstorage

root@ts01:~# virsh pool-define-as nvme-pool dir --target /vmstorage

Pool nvme-pool defined

root@ts01:~# virsh pool-build nvme-pool

Pool nvme-pool built

root@ts01:~# virsh pool-start nvme-pool

Pool nvme-pool started

root@ts01:~# virsh pool-autostart nvme-pool

Pool nvme-pool marked as autostarted

- List current pool

root@ts01:~# virsh pool-list --all

Name State Autostart

---------------------------------

default active yes

nvme-pool active yes

- Move the VM to another disk

root@ts01:~# mv /var/lib/libvirt/images/vm-win10a.qcow2 /vmstorage/vm-win10.qcow2

- Edit the vm settings

root@ts01:~# virsh edit win10a

- Then:

- set source file to:

- /vmstorage/vm-win10.qcow2

- And save

Domain 'win10a' XML configuration edited.

- To delete the pool use:

virsh pool-destroy nvme-pool

virsh pool-undefine nvme-pool

Deploy a new VM via CLI

- Requires (template copy):

- /data/vmstorage/vm-bookworm.qcow2

This will setup a new VM with:

- Name: vm-bookworm

- OS: Debian 12 (Bookworm)

- Disk: /data/vmstorage/vm-bookworm.qcow2

- 2CPU/2GB-RAM

- Network Bridged

root@ts01:/data/vmstorage# virt-install --name vm-bookworm --memory 2048 --vcpus 2 --cpu host-passthrough --os-variant debian12 --disk path=/data/vmstorage/vm-bookworm.qcow2,bus=virtio,discard=unmap --network bridge=br0,model=virtio --graphics spice,listen=0.0.0.0 --channel spicevmc --autoconsole none --import

Starting install...

Creating domain... | 00:00:00

Domain creation completed.

Useful Commands

- Scan the network with nmap to find the IP addresses of new VMs

root@ts01:/var/lib/libvirt/images# nmap -sn 192.168.2.0/24
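After the scan, the host's neighbour table knows which IP address belongs to which MAC address, and the MAC address of a VM is shown by virsh domiflist. The sketch below matches the two; it assumes the usual "ip neigh" line layout (IP first, MAC after the word lladdr), and ip_for_mac is a made-up helper name.

```shell
# Read `ip neigh` output on stdin and print the IP address whose
# link-layer address matches the given MAC (case-insensitive).
ip_for_mac() {
    awk -v mac="$1" '
        { for (i = 1; i < NF; i++)
              if ($i == "lladdr" && tolower($(i + 1)) == tolower(mac)) print $1 }
    '
}

# Example (not executed here), using the MAC shown by `virsh domiflist win11`:
#   nmap -sn 192.168.2.0/24 >/dev/null
#   ip neigh | ip_for_mac 52:54:00:16:25:ee
```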

- Add a description for a VM

root@ts01:/var/lib/libvirt/images# virsh desc vmname "My Description ..." --config

Domain description updated successfully

root@ts01:/var/lib/libvirt/images# virsh desc vmname

My Description ...

- Alternative description with line breaks within the text

root@ts01:/var/lib/libvirt/images# virsh desc vmname --config --new-desc $'Line 1: Text123\nLine 2: Text456\nLine 3: Text789'

Domain description updated successfully

root@ts01:/var/lib/libvirt/images# virsh desc vmname

Line 1: Text123

Line 2: Text456

Line 3: Text789

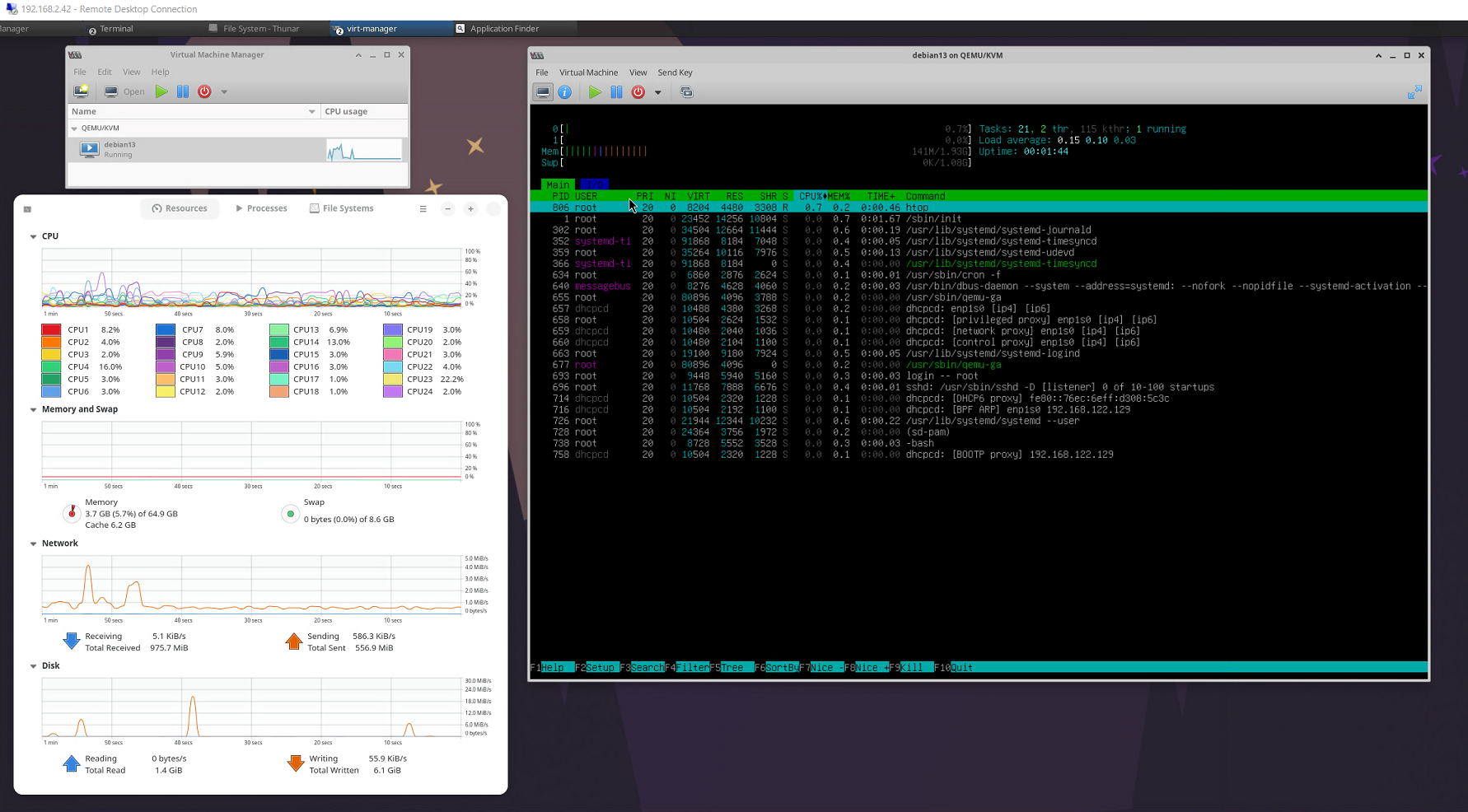

- Virtual Top

root@ts01:/# virt-top

virt-top 21:20:58 - x86_64 24/24CPU 1200MHz 61890MB

6 domains, 1 active, 1 running, 0 sleeping, 0 paused, 5 inactive D:0 O:0 X:0

CPU: 0.1% Mem: 2048 MB (2048 MB by guests)

ID S RDRQ WRRQ RXBY TXBY %CPU %MEM TIME NAME

10 R 0 2 1396 206 0.1 3.0 0:47.07 vm-bookworm

- Configure Autostart

virsh autostart win11

virsh dominfo win11 | grep Autostart

Autostart: enable

Autostart Once: disable